While reference frame search mode (the 'F' key) is running, the framerate drops, but you can keep moving your head around: the preview window flashes green when it finds that your facial pose is a closer match to the avatar than the one it is currently using. You will also see two numbers displayed: the first is how closely you are currently aligned to the avatar, and the second is how closely the reference frame is aligned. You want to get the first number as small as possible; around 10 is usually a good alignment. When you are done, press 'F' again to exit reference frame search mode.

You don't need to be exact, and some other configurations can yield better results still, but it's usually a good starting point.

Configure video meeting app

Avatarify supports any video-conferencing app where the video input source can be changed (Zoom, Skype, Hangouts, Slack). Here are a few examples of how to configure a particular app to use Avatarify.

Go to Settings -> Audio & Video and choose the avatarify (Linux), CamTwist (Mac) or OBS-Camera (Windows) camera.
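As a rough illustration of the reference frame search mode ('F') described above, the sketch below keeps the best-aligned frame seen so far and reports the two numbers (current alignment, reference alignment). The landmark format, the distance metric (mean Euclidean distance between matching landmarks) and the `ReferenceSearch` name are assumptions made for this example; Avatarify's own scoring may differ.

```python
import math
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def pose_distance(a: List[Point], b: List[Point]) -> float:
    """Mean Euclidean distance between matching landmarks (assumed metric)."""
    if len(a) != len(b) or not a:
        raise ValueError("landmark lists must be non-empty and equally long")
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


class ReferenceSearch:
    """Hypothetical helper that remembers the best-aligned frame so far."""

    def __init__(self, avatar_landmarks: List[Point]):
        self.avatar = avatar_landmarks
        self.best_score: Optional[float] = None
        self.best_landmarks: Optional[List[Point]] = None

    def update(self, frame_landmarks: List[Point]) -> Tuple[float, float, bool]:
        """Return (current score, reference score, whether the reference improved)."""
        current = pose_distance(frame_landmarks, self.avatar)
        improved = self.best_score is None or current < self.best_score
        if improved:
            # A closer match was found; this is where the preview would flash green.
            self.best_score = current
            self.best_landmarks = frame_landmarks
        return current, self.best_score, improved
```

Calling `update` once per camera frame yields a pair of numbers analogous to those shown in the preview: smaller means closer alignment, and the second number only ever decreases while the search is running.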

Camera translation keys: H - left, K - right, U - up, J - down, each by 5 pixels.

These are the main principles for driving your avatar:

Align your face in the camera window as closely as possible in proportion and position to the target avatar. Use the zoom in/out function (W/S keys) and the camera left, right, up, down translation (U/H/J/K keys). When you have aligned, hit 'X' to use this frame as the reference to drive the rest of the animation.

Use the image overlay function (Z/C keys) or the face detection overlay function (O key) to match your facial expression to the avatar's as closely as possible.

Alternatively, you can hit 'F' for the software to attempt to find a better reference frame itself.
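The camera keys above can be pictured as small updates to a crop window. The following is a minimal sketch under stated assumptions: the 5-pixel translation step comes from the text, while the `CameraView` class, the zoom step and the minimum zoom are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

STEP_PX = 5        # translation step stated in the text
ZOOM_STEP = 0.05   # assumed zoom increment, not taken from the text
MIN_ZOOM = 0.1     # assumed lower bound to keep the crop valid

# Key -> (dx, dy) in image coordinates, where y grows downward.
TRANSLATE = {
    "H": (-STEP_PX, 0),  # left
    "K": (STEP_PX, 0),   # right
    "U": (0, -STEP_PX),  # up
    "J": (0, STEP_PX),   # down
}


@dataclass
class CameraView:
    offset_x: int = 0
    offset_y: int = 0
    zoom: float = 1.0

    def handle_key(self, key: str) -> None:
        """Apply one key press; unknown keys are ignored."""
        if key in TRANSLATE:
            dx, dy = TRANSLATE[key]
            self.offset_x += dx
            self.offset_y += dy
        elif key == "W":  # zoom in
            self.zoom = round(self.zoom + ZOOM_STEP, 2)
        elif key == "S":  # zoom out, clamped at MIN_ZOOM
            self.zoom = round(max(MIN_ZOOM, self.zoom - ZOOM_STEP), 2)
```

Keeping the key table as data makes it easy to see that U and J move along the vertical axis and H and K along the horizontal one.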

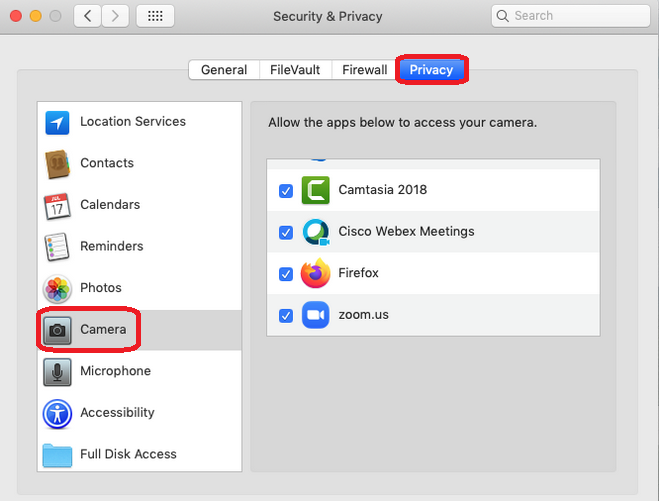

Of course, you also need a webcam!

Linux uses v4l2loopback to create a virtual camera. Download Miniconda Python 3.7 and install it from the command line. Download the network weights and place the file in the avatarify-python directory (don't unpack it).

If installation was successful, two windows, "cam" and "avatarify", will appear. Leave these windows open for the next installation steps. Install OBS Studio for capturing Avatarify output. Choose Install and register only 1 virtual camera. In the Sources section, press the Add button ("+" sign), select Window Capture and press OK. In the window that appears, choose ": avatarify" in the Window drop-down menu and press OK. Then select Edit -> Transform -> Fit to screen. In OBS Studio, go to Tools -> VirtualCam. Check AutoStart, set Buffered Frames to 0 and press Start. Now the OBS-Camera camera should be available in Zoom (or other videoconferencing software). The steps 10-11 are required only once during setup.

You can offload the heavy work to Google Colab or a server with a GPU and use your laptop just to communicate the video stream. The server and client software are available both natively and as Docker images.

Docker

Docker images are only available on Linux. Install Docker following the Documentation. Then run this step to make Docker available for your user. For using the GPU (strongly recommended): install the NVIDIA drivers and nvidia docker. Clone avatarify-python and install its dependencies (v4l2loopback kernel module).

OBS Studio should automatically start streaming video from Avatarify to OBS-Camera. The cam and avatarify windows will pop up. The cam window is for controlling your face position and avatarify is for the avatar animation preview. Please follow these recommendations to drive your avatars. Note: To reduce video latency, in OBS Studio right click on the preview window and uncheck Enable Preview.

These will immediately switch between the first 9 avatars. Every time you push the button, a new avatar is sampled.
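For the Linux setup mentioned above, v4l2loopback exposes its virtual camera as an ordinary V4L2 device node under `/dev` (for example `/dev/video2`). The sketch below simply lists such nodes so you can check that a new one appeared after loading the module. Which node number Avatarify uses is not stated here, so the helper, whose name `list_video_devices` is made up for this example, does not guess it.

```python
import re
from pathlib import Path
from typing import List


def list_video_devices(dev_dir: str = "/dev") -> List[str]:
    """Return V4L2-style device nodes (video0, video1, ...) sorted by index."""
    pattern = re.compile(r"^video(\d+)$")
    root = Path(dev_dir)
    if not root.is_dir():
        # No device directory (e.g. on Windows): nothing to list.
        return []
    found = []
    for entry in root.iterdir():
        match = pattern.match(entry.name)
        if match:
            found.append((int(match.group(1)), str(entry)))
    # Sort numerically so that video10 comes after video2.
    return [path for _, path in sorted(found)]
```

Running `list_video_devices()` before and after the v4l2loopback module is loaded, and comparing the two lists, shows which node belongs to the virtual camera.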
▶️ AI-generated Elon Musk

You can run Avatarify in two modes: locally and remotely.

To run Avatarify locally you need a CUDA-enabled (NVIDIA) video card. Otherwise it will fall back to the central processor and run very slowly. These are performance metrics for some hardware: GeForce GTX 1080 Ti: 33 frames per second.

You can also run Avatarify remotely on Google Colab (easy) or on a dedicated server with a GPU (harder). There are no special PC requirements for this mode, only a stable internet connection.

Install

Download network weights

Download the model's weights from here or here or here.
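Before choosing between local and remote mode, it can help to check whether a CUDA-capable GPU is visible at all. The sketch below assumes the model runs on PyTorch, which the text above does not state, and falls back to "cpu" when PyTorch is missing. `pick_device` and `frame_budget_ms` are hypothetical helper names; the second one only converts a frame rate such as the quoted 33 frames per second into a per-frame time budget.

```python
def pick_device() -> str:
    """Return "cuda" if PyTorch can see an NVIDIA GPU, otherwise "cpu"."""
    try:
        import torch  # assumption: PyTorch is the framework in use
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    print("device:", pick_device())
    print("budget at 33 fps: %.1f ms per frame" % frame_budget_ms(33))
```

If `pick_device()` reports "cpu", the remote mode is likely the more practical option, since local mode without a GPU runs very slowly.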
